AI-designed structured material creates super-resolution images using low-resolution displays

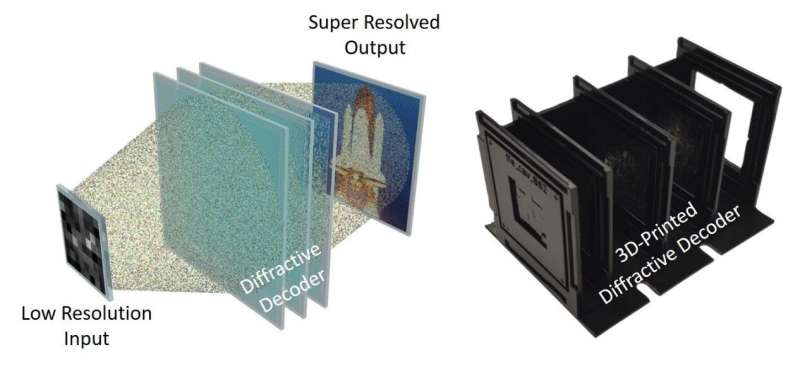

Super-resolution image display using a diffractive decoder. Credit: Ozcan Lab @ UCLA.

One of the promising technologies being developed for next-generation augmented/virtual reality (AR/VR) systems is the holographic image display, which uses coherent light illumination to emulate the 3D optical waves representing, for example, the objects within a scene. These holographic displays have the potential to simplify the optical setup of wearable displays, leading to compact and lightweight form factors.

However, an ideal AR/VR experience requires relatively high-resolution images formed over a large field of view to match the resolution and viewing angles of the human eye. The capabilities of holographic image projection systems are currently restricted, mainly by the limited number of independently controllable pixels in existing image projectors and spatial light modulators.

A recent study published in Science Advances reported a deep learning-designed transmissive material that can project super-resolved images using a low-resolution image display. In their paper titled “Super-resolution image display using diffractive decoders,” UCLA researchers led by Professor Aydogan Ozcan used deep learning to spatially engineer transmissive diffractive layers at the wavelength scale, creating a material-based physical image decoder that achieves super-resolution image projection as light passes through its layers.

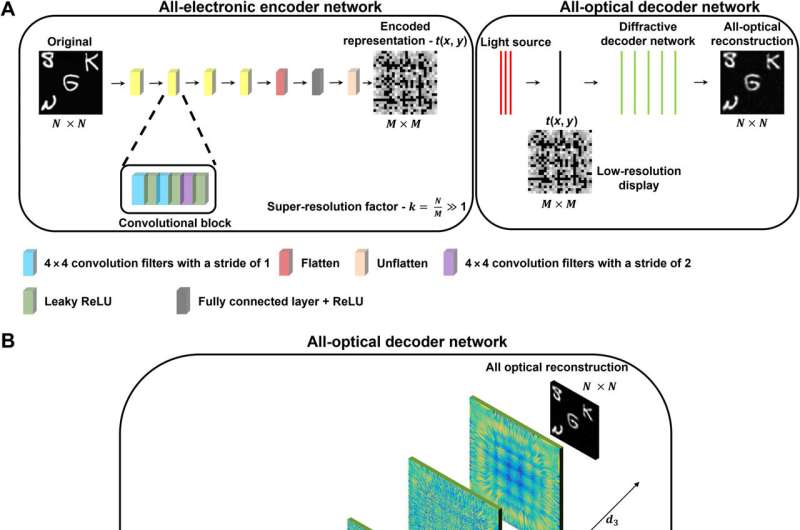

Imagine having a stream of high-resolution images stored in the cloud or on your local PC, waiting to be sent to a head-mounted display for you to view. Instead of sending these high-resolution images to your wearable display, this new technology first runs them through a digital neural network (the encoder) to compress them into lower-resolution images that look like barcodes and are meaningless to the human eye.
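The article does not describe the encoder's internals, so the sketch below only illustrates the dimensional bookkeeping, under a loud assumption: `toy_encode` is a hypothetical placeholder that reduces a (kN)×(kN) image to an N×N pattern by averaging each k×k block. The real encoder is a trained neural network whose output looks like a barcode; block averaging reproduces only its input and output shapes, not its function.

```python
import numpy as np

def toy_encode(image: np.ndarray, k: int) -> np.ndarray:
    """Hypothetical stand-in for the learned encoder.

    Maps a (k*N, k*M) image to an (N, M) pattern by averaging each k x k block.
    This is NOT the trained encoder; it only mimics its input/output shapes.
    """
    h, w = image.shape
    if h % k or w % k:
        raise ValueError("image size must be a multiple of k")
    # Split rows and columns into (block index, offset inside block), then average the offsets.
    return image.reshape(h // k, k, w // k, k).mean(axis=(1, 3))

high_res = np.random.default_rng(0).random((64, 64))  # hypothetical high-resolution frame
low_res = toy_encode(high_res, k=4)                    # pattern that would be sent to the display
print(high_res.size, low_res.size)  # 4096 256
```

With k = 4, the pattern sent to the display has 16 times fewer pixels than the target image.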

However, this image compression is unlike other digital compression schemes, because the compressed images are not decoded or decompressed on a computer. Instead, a transmissive, material-based diffractive decoder optically decompresses these lower-resolution images and projects the desired high-resolution images as the light from the low-resolution display passes through the thin layers of the decoder. The decompression from low to high resolution is therefore completed using only light diffraction through a thin, passive structured material, which makes the whole process extremely fast, because the transmissive diffractive decoder can be as thin as a stamp.
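The decoding step can be illustrated with a standard scalar-diffraction forward model: free-space propagation computed with the angular spectrum method, alternating with thin phase-only layers. This is a minimal sketch under stated assumptions, not the authors' trained design: the phase values are random rather than learned (so the output is not a super-resolved image), the display pattern is treated as an amplitude mask, and the wavelength, grid pitch and spacings are hypothetical choices; only the count of five layers follows the figure caption. The names `angular_spectrum` and `diffractive_decode` are illustrative.

```python
import numpy as np

def angular_spectrum(field: np.ndarray, dz: float, wavelength: float, dx: float) -> np.ndarray:
    """Propagate a square complex field by dz through free space (angular spectrum method)."""
    n = field.shape[0]
    fx = np.fft.fftfreq(n, d=dx)                      # spatial frequencies, cycles per metre
    FX, FY = np.meshgrid(fx, fx, indexing="ij")
    arg = 1.0 / wavelength**2 - FX**2 - FY**2
    kz = 2 * np.pi * np.sqrt(np.maximum(arg, 0.0))    # axial wavenumber of each plane wave
    H = np.where(arg > 0, np.exp(1j * kz * dz), 0.0)  # evanescent components are discarded
    return np.fft.ifft2(np.fft.fft2(field) * H)


def diffractive_decode(pattern, phase_layers, gaps, wavelength, dx, k):
    """Forward model: display pattern -> phase-only layers -> output-plane intensity.

    gaps[i] is the distance travelled before layer i; gaps[-1] is last layer -> output.
    """
    if len(gaps) != len(phase_layers) + 1:
        raise ValueError("need one gap per layer plus one to the output plane")
    # Assumption: each low-resolution display pixel is a uniform k x k amplitude patch.
    field = np.kron(pattern, np.ones((k, k))).astype(complex)
    for phase, dz in zip(phase_layers, gaps):
        field = angular_spectrum(field, dz, wavelength, dx)
        field = field * np.exp(1j * phase)             # thin, passive phase modulation
    field = angular_spectrum(field, gaps[-1], wavelength, dx)
    return np.abs(field) ** 2                          # the eye or detector sees intensity


rng = np.random.default_rng(0)
k, n_low = 4, 16
wavelength = 0.75e-3                 # assumed terahertz wavelength (about 0.4 THz), metres
dx = wavelength / 2                  # assumed sampling pitch of the layers
pattern = rng.random((n_low, n_low))  # stand-in for the encoder output
layers = [rng.uniform(0, 2 * np.pi, (k * n_low,) * 2) for _ in range(5)]  # untrained phases
gaps = [40 * wavelength] * 6         # hypothetical spacings: display -> L1 ... L5 -> output
image = diffractive_decode(pattern, layers, gaps, wavelength, dx, k)
print(image.shape)  # (64, 64)
```

In a trained decoder, the phase values of each layer would typically be optimized by gradient descent through a differentiable forward model like this one, jointly with the encoder, so that the output intensity matches the target high-resolution image. Because the layers only modulate phase, the model consumes no energy beyond the illumination, in line with the passive operation described in the article.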

Schematic of the pixel super-resolution (PSR) image display, including an all-electronic encoder and an all-optical decoder. (A) The building blocks of the PSR image display: an all-electronic encoder and an all-optical decoder made of five diffractive modulation layers. The all-electronic encoder network generates low-resolution representations of the input images, which are then super-resolved by the diffractive optical decoder, achieving the desired PSR factor (k > 1). (B) Optical layout of the five-layer diffractive decoder network, with d1 = 2.667λ, d2 = 66.667λ and d3 = 80λ. ReLU, rectified linear unit. Credit: Science Advances (2022). DOI: 10.1126/sciadv.add3433
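The caption gives the layer spacings in units of the wavelength λ. The helper below converts them to physical distances for a chosen illumination frequency. The 0.4 THz value is an assumption for illustration (the article says only that the experiment operated in the terahertz band), and `wavelengths_to_mm` is a hypothetical helper name.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s

def wavelengths_to_mm(multiples: dict, freq_hz: float) -> dict:
    """Convert distances expressed in wavelengths to millimetres at freq_hz."""
    lam_mm = C / freq_hz * 1e3  # wavelength in millimetres
    return {name: m * lam_mm for name, m in multiples.items()}

spacings = {"d1": 2.667, "d2": 66.667, "d3": 80.0}  # from the caption, in units of λ
freq = 0.4e12  # assumed illumination frequency (0.4 THz), not stated in the article
for name, mm in wavelengths_to_mm(spacings, freq).items():
    print(f"{name} = {mm:.2f} mm")
```

At the assumed 0.4 THz (λ ≈ 0.75 mm), this gives d1 ≈ 2.0 mm, d2 ≈ 50 mm and d3 ≈ 60 mm; at visible wavelengths the same multiples would shrink to the micrometre scale.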

In addition to being extremely fast, this diffractive image decoding scheme is also energy efficient, because the image decompression relies on light diffraction through a passive material and consumes no power other than the illumination light.

The UCLA team showed that these deep learning-designed diffractive decoders can achieve a super-resolution factor of ~4 in each lateral direction of the image, corresponding to a ~16-fold increase in the effective number of useful pixels in the projected image.
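The pixel-count gain follows directly from applying the super-resolution factor k along both lateral axes. For a display with N_x × N_y independently controllable pixels:

$$
N_{\text{eff}} = (k N_x)(k N_y) = k^2 N_x N_y, \qquad k \approx 4 \;\Rightarrow\; N_{\text{eff}} \approx 16\, N_x N_y .
$$

For instance, a hypothetical 1000 × 1000 display would yield roughly 4000 × 4000 ≈ 1.6 × 10^7 effective pixels in the projected image.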

In addition to improving the resolution of the projected image, this diffractive image display also substantially reduces data transmission and storage requirements: high-resolution images are encoded into compact optical representations with fewer pixels, which greatly reduces the amount of information that must be transmitted to the wearable display.
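The storage and bandwidth saving can be estimated with simple arithmetic. This sketch assumes, hypothetically, uncompressed 8-bit frames, a 2048 × 2048 target image, k = 4 and 60 frames per second; the real encoded patterns may use a different bit depth, so the numbers only illustrate the k² scaling. `frame_bytes` is an illustrative helper.

```python
def frame_bytes(width: int, height: int, bits_per_pixel: int = 8) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * bits_per_pixel // 8

k = 4                                # super-resolution factor per lateral direction
hi_w, hi_h = 2048, 2048              # hypothetical target image size
lo_w, lo_h = hi_w // k, hi_h // k    # encoded pattern actually sent to the display
hi = frame_bytes(hi_w, hi_h)
lo = frame_bytes(lo_w, lo_h)
fps = 60                             # assumed frame rate
print(hi / lo)  # 16.0
print(f"{hi * fps / 1e6:.1f} MB/s vs {lo * fps / 1e6:.1f} MB/s at {fps} fps")
```

Under these assumptions the link to the headset carries about 16 times less raw data per second than it would for the full-resolution stream.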

The team experimentally demonstrated diffractive super-resolution image display using a 3D-printed diffractive decoder that operates in the terahertz part of the electromagnetic spectrum, which is used, for example, in security image scanners at airports. The researchers also report that the super-resolution capabilities of the presented diffractive decoder can be extended to project color images at red, green and blue wavelengths.

The study’s principal investigator, Professor Aydogan Ozcan, said: “This diffractive super-resolution image display design will inspire display solutions with enhanced resolution, which could become building blocks of next-generation 3D display technologies, including, for example, head-mounted devices.”

Other co-authors of this work include Professor Mona Jarrahi, Northrop Grumman Endowed Chair of Electrical and Computer Engineering at UCLA, and graduate students Cagatay Isil, Deniz Mengu, Yifan Zhao, Anika Tabassum, Jingxi Li and Yi Luo, all from UCLA.

Çağatay Işıl et al., Super-resolution image display using diffractive decoders, Science Advances (2022). DOI: 10.1126/sciadv.add3433

Citation: AI-designed structured material generates super high-resolution images using low-resolution displays (2022, December 5) retrieved December 5, 2022 from https://techxplore.com/news/2022-12-ai-design-material-super-resolution-image-low-res.html
